Engineering the Compute Foundation for Hyperscale

How Brightwave provisions hundreds of secure, isolated compute environments that scale on demand.

Isolation as Infrastructure

When thousands of agents are executing LLM-generated code in parallel — installing packages, making network requests, writing to disk — on behalf of multiple enterprise customers sharing the same cloud hardware, the isolation boundary between those environments is the most consequential piece of infrastructure in the entire system. If it fails, nothing else matters.

Agent isolation in the broader ecosystem exists on a spectrum, and the industry has been moving steadily toward increasingly sophisticated security and compute models. Early orchestration frameworks ran agents as co-routines within a single process, sharing memory, a filesystem, and failure modes. Modern harnesses have moved well beyond this: Claude Code sandboxes command execution behind OS-level primitives like Seatbelt and bubblewrap; OpenClaw and similar frameworks support configurable container isolation with backends ranging from the host machine to remote VMs. These are genuine advances that make single-agent and small-team workflows safe and practical. But our requirements are different: thousands of concurrent agents from different tenants on shared physical machines, each running arbitrary code for extended periods.

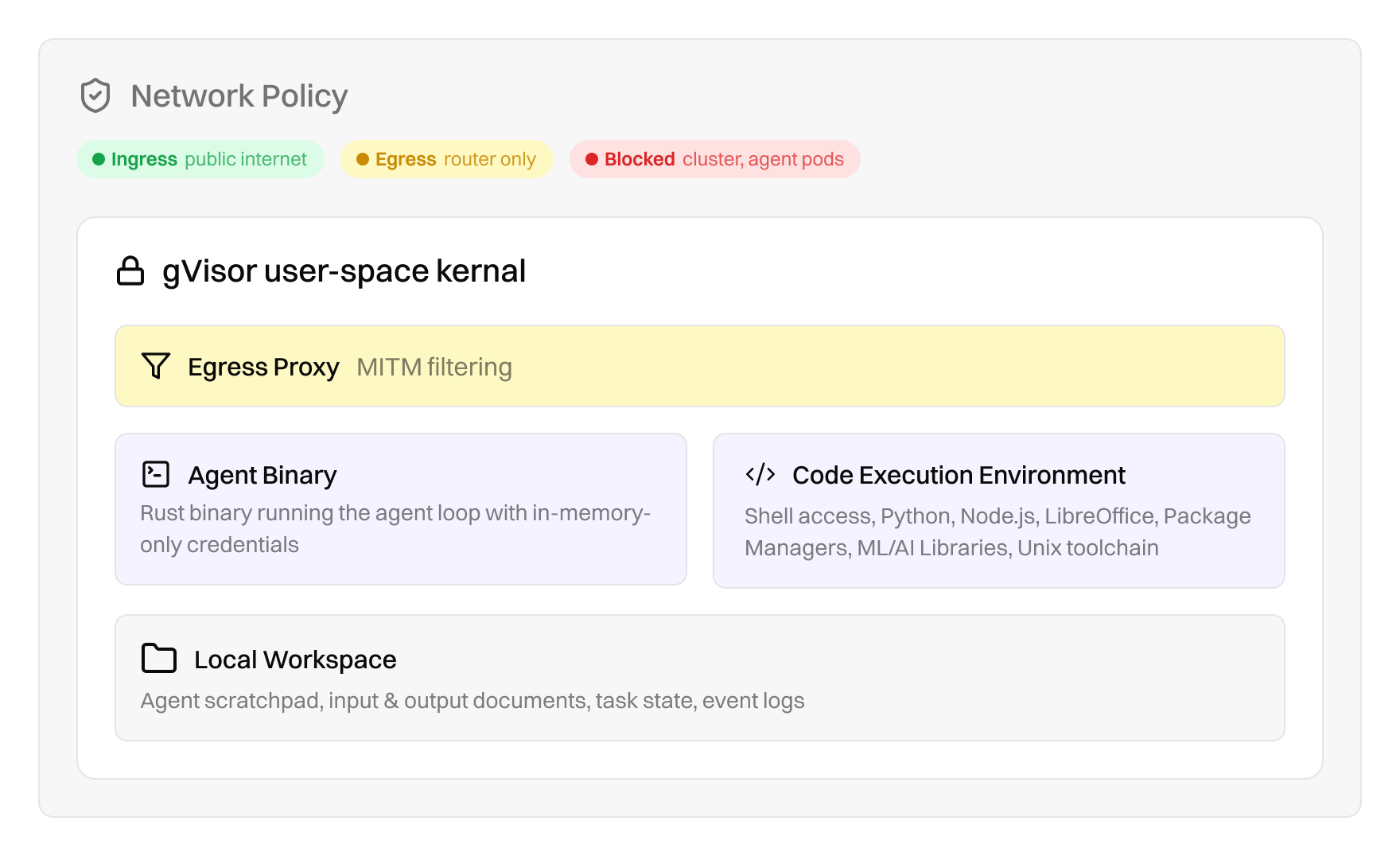

Each agent in our system gets a dedicated compute environment with its own filesystem, process space, and network stack, running under gVisor, a user-space kernel that intercepts every system call without ever exposing the host kernel to agent-generated code. In the rest of this post, we'll walk through the architectural decisions behind this foundation.

Why Containers Aren't Enough

The default container isolation model — Linux namespaces and cgroups — was designed to run trusted application workloads. It provides resource limits and filesystem separation, but all containers on a host share the same kernel. This is fine when you control the code running inside. It is not fine when the code is being written in real time by a language model that may probe system boundaries in unexpected ways.

What's more, container escape vulnerabilities are a well-documented, regularly exploited attack surface. When you run LLM-generated code inside a standard container, you are trusting that the model will never produce a sequence of system calls that exploits a kernel vulnerability. That is not a bet we are willing to make when running thousands of concurrent agents on behalf of enterprise customers.

Our agents run under a user-space kernel that intercepts every system call from the agent process and handles it in an isolated runtime. The host kernel is never exposed to agent-generated code. This is the same class of isolation technology that major cloud providers use to run untrusted workloads in their serverless and managed compute offerings. The overhead is measurable but modest, and we accept it in exchange for a stronger isolation boundary.
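As a rough illustration of how a workload opts into a sandboxed runtime on Kubernetes, the sketch below builds a Pod manifest as a plain Python dict. The `runtimeClassName` field is standard Kubernetes; the RuntimeClass name `gvisor`, the image URL, and the `agent_pod_manifest` helper are assumptions made for the example, not details taken from our deployment.

```python
def agent_pod_manifest(agent_id, image, runtime_class="gvisor"):
    """Build a Pod manifest (as a plain dict) that requests a sandboxed runtime."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": f"agent-{agent_id}", "labels": {"app": "agent"}},
        "spec": {
            # Assumption: a RuntimeClass with this name exists in the cluster
            # and maps to gVisor's runsc handler.
            "runtimeClassName": runtime_class,
            # Agents are one-shot workloads in this sketch, so never restart.
            "restartPolicy": "Never",
            "containers": [{"name": "agent", "image": image}],
        },
    }


# Hypothetical agent id and image reference, for illustration only.
manifest = agent_pod_manifest("42", "registry.example.com/agent:latest")
```

Serialized to YAML or JSON, a manifest like this asks the node's container runtime to start the pod under the named sandbox instead of the default runtime.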

The Agent Binary

Isolated by gVisor, each agent runs a single, statically-linked Rust binary. This binary does two things: it runs the agent loop — the cycle of reasoning, tool execution, and observation that constitutes the agent's work — and it exposes a local HTTP API that the orchestration layer uses to initialize, monitor, and communicate with the agent.
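To make the shape of that local API concrete, here is a minimal sketch in Python. The real binary is written in Rust, and the endpoint paths (`/init`, `/status`, `/messages`), the status strings, and the `AgentState` and `route` names are all hypothetical; the sketch only shows how initialize, monitor, and communicate could map onto three small routes.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


class AgentState:
    """In-memory state for one agent (hypothetical fields)."""

    def __init__(self):
        self.status = "uninitialized"
        self.task = None
        self.inbox = []


def route(state, method, path, payload):
    """Map one request to an (http_status, response_body) pair."""
    if method == "POST" and path == "/init":
        if state.status != "uninitialized":
            return 409, {"error": "already initialized"}
        state.task = payload.get("task")
        state.status = "running"
        return 200, {"status": state.status}
    if method == "GET" and path == "/status":
        return 200, {"status": state.status, "pending_messages": len(state.inbox)}
    if method == "POST" and path == "/messages":
        if state.status != "running":
            return 409, {"error": "agent not running"}
        state.inbox.append(payload)
        return 202, {"queued": len(state.inbox)}
    return 404, {"error": "not found"}


STATE = AgentState()


class Handler(BaseHTTPRequestHandler):
    """Thin HTTP wrapper that delegates every request to route()."""

    def _respond(self, method):
        length = int(self.headers.get("Content-Length") or 0)
        payload = json.loads(self.rfile.read(length)) if length else {}
        code, body = route(STATE, method, self.path, payload)
        data = json.dumps(body).encode()
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self):
        self._respond("GET")

    def do_POST(self):
        self._respond("POST")


def serve(port=8080):
    """Serve on loopback only, so the API is reachable just from inside the pod."""
    HTTPServer(("127.0.0.1", port), Handler).serve_forever()
```

Keeping the routing logic in a pure function separate from the HTTP handler makes the state transitions easy to test without opening a socket.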

The container image that hosts this binary includes a complete working environment: a Python runtime, document processing capabilities, AI/ML libraries, a charting engine, and the standard Unix toolchain. The image is built once and reused for every agent — there's no per-agent setup time beyond pulling the image (which is cached on warm nodes).

The choice of Rust for the agent binary is deliberate: it gives us memory safety, zero-cost abstractions, and the ability to compile to a single static binary that runs on any Linux environment without dependency resolution. The binary starts in milliseconds. There is no interpreter, no managed runtime, and no garbage collector between the agent and the machine.

Credential Security & Network Isolation

Environment variables are the first place any agent, or any attacker, looks for secrets, so credentials are never set as environment variables. They are never written to disk, and they are never baked into the container image. Instead, when an agent is initialized, the orchestration layer transmits credentials over an encrypted channel to the agent binary, which holds them exclusively in process memory. The agent loop itself has no mechanism to access raw credentials; it makes API calls through an internal client that attaches authentication transparently. The credentials exist only in memory, inside a compiled binary running under kernel-level isolation.

Even if an agent's generated code attempts to enumerate environment variables, scan the filesystem, or inspect its own process — which adversarial prompt injection might attempt — there is nothing to find. The credentials are architecturally invisible to the code execution environment.
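The "internal client that attaches authentication transparently" pattern can be sketched as follows. This is an illustration of the API shape only: the names `make_authenticated_client` and `fake_send`, the bearer-token scheme, and the URL are assumptions, and Python cannot enforce the boundary the way a compiled binary can, since closure contents remain inspectable from within the same interpreter.

```python
def make_authenticated_client(token, send):
    """Return a call() function that adds auth; the caller never handles the token."""

    def call(url, headers=None):
        # Copy so the caller's dict is never mutated with the secret.
        outgoing = dict(headers or {})
        outgoing["Authorization"] = f"Bearer {token}"
        return send(url, outgoing)

    return call


# Stand-in transport that records what would go over the wire.
sent = []


def fake_send(url, headers):
    sent.append((url, headers))
    return {"status": 200}


# The token arrives once at initialization and is captured in the closure.
call_api = make_authenticated_client("example-token", fake_send)

# The agent loop supplies only the URL and its own headers.
caller_headers = {"Accept": "application/json"}
result = call_api("https://api.example.com/v1/search", caller_headers)
```

The agent loop receives only the response. The header carrying the credential exists on the outgoing request and nowhere in the data the loop passed in or got back.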

What's more, every agent pod runs a Rust-based MITM sidecar proxy that intercepts all outbound HTTPS traffic. For configured domains, it terminates TLS with an ephemeral certificate signed by a per-pod CA, inspects the request, and swaps dummy API keys for real credentials before re-encrypting and forwarding upstream. The agent code, and any code the agent generates, never sees a real API key.
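The credential swap itself is conceptually simple once the proxy has the decrypted request in hand. The sketch below shows only that rewriting step, not TLS termination or certificate handling. The `swap_credentials` function, the rule table, the domain, and the key values are all hypothetical.

```python
# Hypothetical rule table: host -> (dummy key the agent sees, real key).
RULES = {
    "api.example.com": ("sk-dummy-123", "sk-real-abc"),
}


def swap_credentials(host, headers, rules):
    """Return a copy of headers with the dummy key replaced for configured hosts."""
    rule = rules.get(host.lower())
    if rule is None:
        # Not a configured domain: forward the headers unchanged.
        return dict(headers)
    dummy, real = rule
    return {name: value.replace(dummy, real) for name, value in headers.items()}


agent_headers = {
    "Authorization": "Bearer sk-dummy-123",
    "Accept": "application/json",
}
upstream_headers = swap_credentials("api.example.com", agent_headers, RULES)
```

Because the function returns a new dict, the headers the agent constructed still contain only the dummy key after the swap.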

Scaling to Hundreds in Seconds

Running agents at this scale only works if the infrastructure underneath can scale just as quickly.

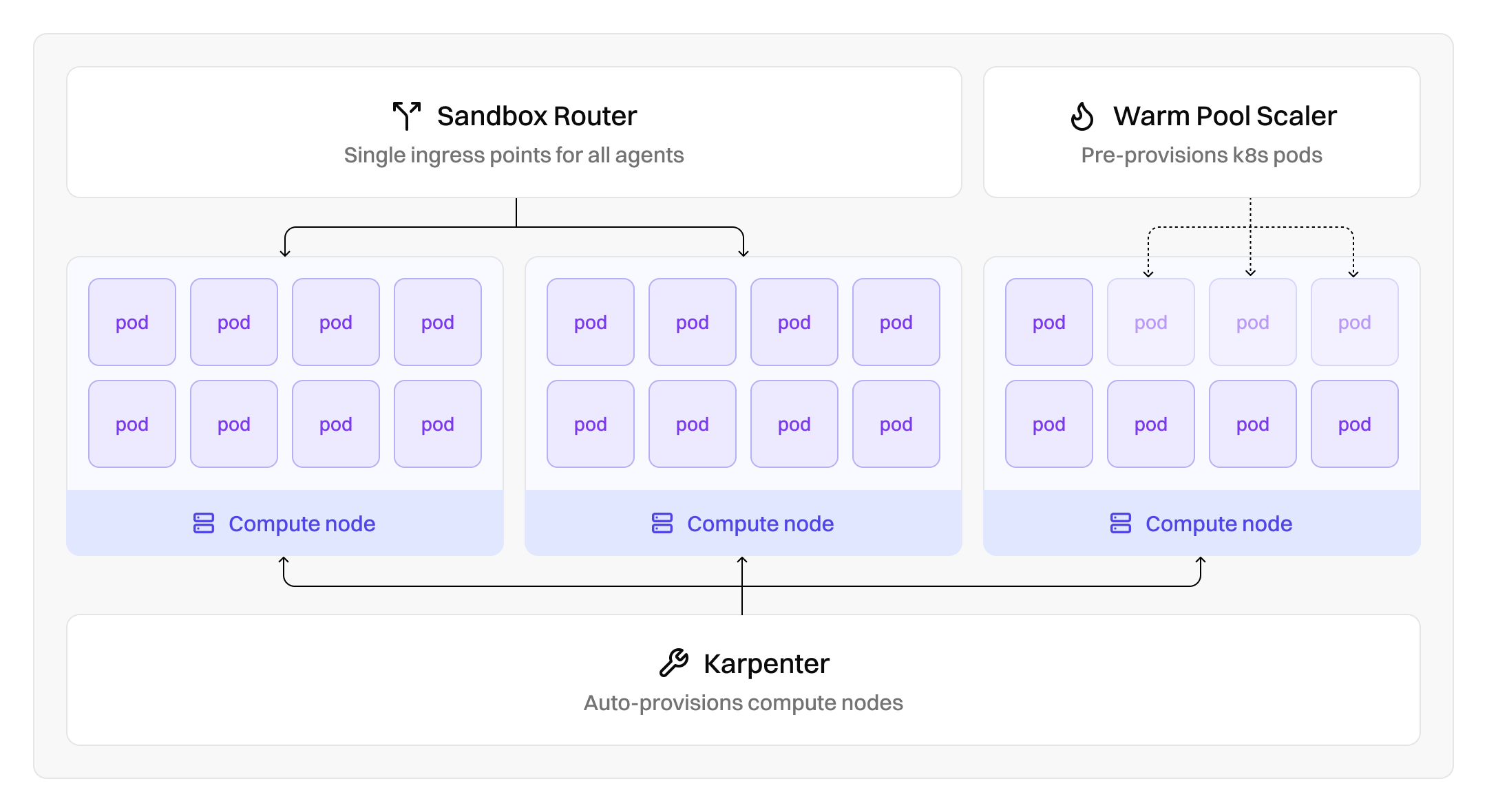

We run on Kubernetes with Karpenter as the node provisioner. When agents are scheduled and the cluster lacks capacity, Karpenter provisions new nodes — typically spot instances from the cloud provider — in seconds. The agents themselves have a lightweight resource footprint, which means dozens of agents can pack onto a single node.
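The packing arithmetic behind "dozens of agents per node" is worth spelling out. The numbers below are illustrative assumptions, not our actual instance sizes or resource requests, and the sketch ignores capacity reserved for system daemons.

```python
def agents_per_node(node_cpu_m, node_mem_mib, agent_cpu_m, agent_mem_mib):
    """How many agents fit on one node, limited by the scarcer resource."""
    return min(node_cpu_m // agent_cpu_m, node_mem_mib // agent_mem_mib)


def nodes_needed(agent_count, per_node):
    """Ceiling division: a partially filled node still counts as a node."""
    return (agent_count + per_node - 1) // per_node


# Hypothetical sizes: a 16 vCPU / 64 GiB node, agents requesting
# 250 millicores and 512 MiB each.
per_node = agents_per_node(16000, 65536, 250, 512)
nodes = nodes_needed(1000, per_node)
```

With these assumed figures CPU is the binding constraint: 64 agents fit per node, so 1,000 agents need 16 nodes.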

To eliminate cold-start latency for the first wave of agents in a task, we maintain warm pools — pre-provisioned environments that sit idle and ready to accept initialization. When a large-scale task begins, the first batch of agents can start working within seconds. Subsequent batches provision fresh environments as Karpenter scales the underlying node pool.
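A minimal sketch of the warm-pool handoff, assuming a simple in-memory queue of idle environment IDs: the `WarmPool` class and the `env-N` identifiers are hypothetical, and a real pool would also need replenishment and health checks.

```python
from collections import deque


class WarmPool:
    """Queue of pre-provisioned environments waiting for initialization."""

    def __init__(self, env_ids):
        self._idle = deque(env_ids)

    def acquire(self, count):
        """Return (warm_envs, cold_needed) for a batch of `count` agents."""
        take = min(count, len(self._idle))
        warm = [self._idle.popleft() for _ in range(take)]
        # Anything the pool cannot cover must be provisioned fresh.
        return warm, count - take


pool = WarmPool(["env-0", "env-1", "env-2"])
first_batch, cold_needed = pool.acquire(5)
later_batch, later_cold = pool.acquire(1)
```

Here the first request for five environments is served three from the pool with two left to provision, and the next request finds the pool empty and falls through entirely to fresh provisioning.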

When an agent finishes its work, its environment is terminated and its resources are released. There are no idle machines accumulating cost. The compute exists for exactly as long as the work requires, and then it disappears.

So What

We built all of this — the kernel isolation, the in-memory credential model, the network segmentation, the auto-scaling node pools, the checkpoint-resume system — so that when a user asks a question that requires deep, parallel research across hundreds of sources, we can provide scalable on-demand compute with enterprise-grade security guarantees.

The compute layer is the unglamorous foundation that makes everything above it possible. Secure isolation means agents can execute arbitrary code without putting the host or other tenants at risk. Auto-scaling means we don't pre-commit to a capacity ceiling. Ephemeral compute with resumability means we don't pay for idle resources or lose work to infrastructure failures.

In the next post, we'll go inside one of these environments and look at how a single agent organizes its work, manages its memory, and operates autonomously over extended time horizons.